When I built the MVP for Algorion AI, we predicted 48 stocks.

It was fast. It was easy. I thought scaling would just be a for loop.

I was wrong.

The Naive Beginning

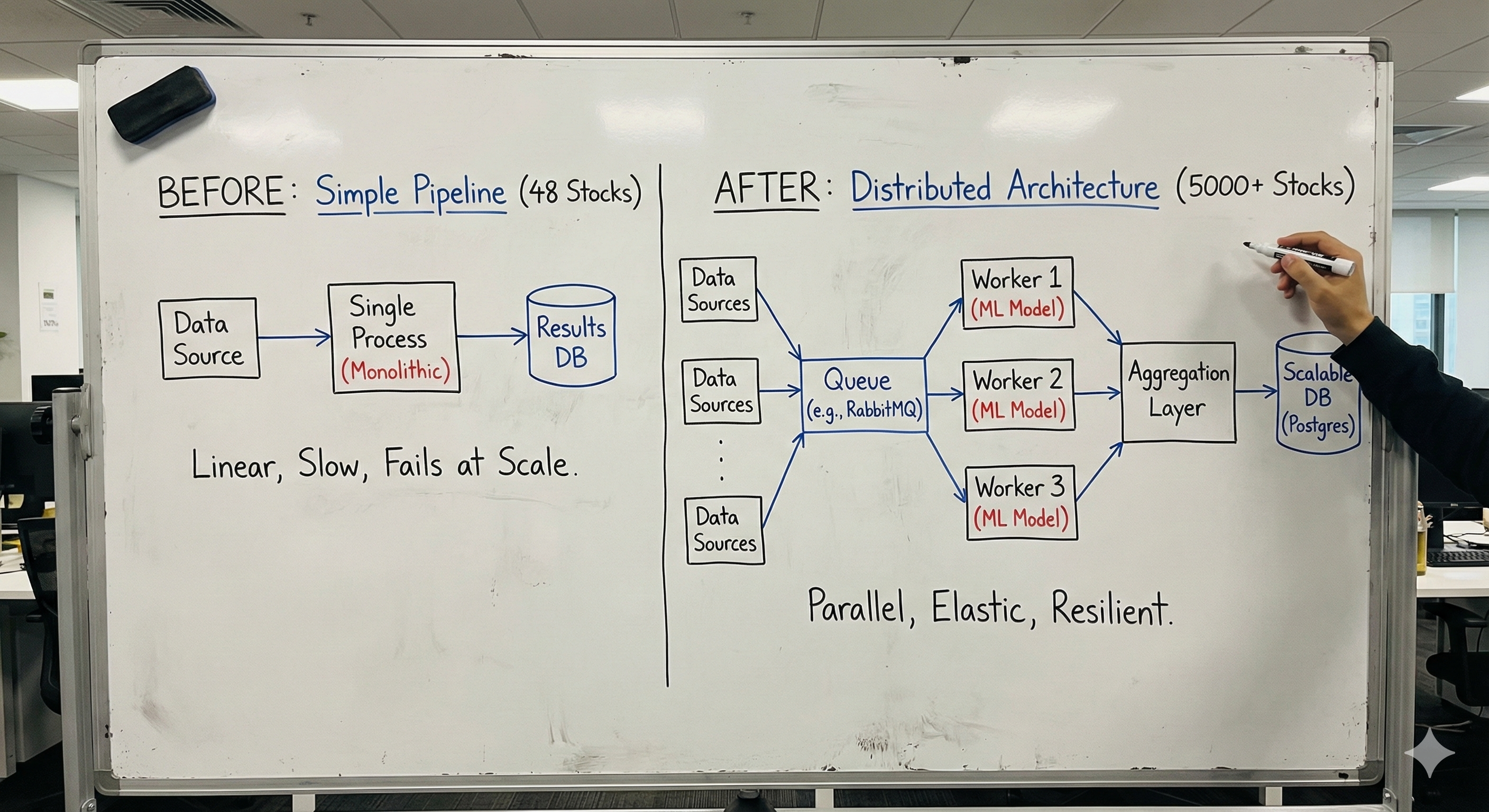

The first version of our stock prediction system was beautifully simple. We had 48 carefully selected stocks—India's blue-chip companies. The pipeline looked something like this:

# The "it works on my machine" version

for stock in stocks: # 48 stocks

data = fetch_data(stock)

prediction = model.predict(data)

save_result(stock, prediction)

It ran in under 5 minutes. Memory usage was negligible. Life was good.

Then the business requirement changed: We need to cover the entire NSE and BSE.

That meant going from 48 stocks to 5,000+ instruments.

I underestimated the beast.

The 100x Challenge

Let me break down the math that humbled me:

| Metric | MVP (48 stocks) | Production (5,000+ stocks) | |--------|-----------------|---------------------------| | Data Volume | ~2 MB/day | ~200 MB/day | | API Calls | 48 calls | 5,000+ calls | | Model Inference | 48 predictions | 5,000+ predictions | | Memory Footprint | ~500 MB | 💀 |

The first time I tried to run the "scaled" version, here's what happened:

- RAM spiked to 32GB and the process got killed

- The data API rate-limited us after 200 requests

- A single run took 4+ hours (we needed it done in 30 minutes)

- Three models crashed due to out-of-memory errors

The naive approach wasn't just slow—it was impossible.

The Architecture Shift

This is where things got interesting. And challenging.

I'm currently doing what I call an "AI Detox" with our Velocity Engine project—relying on logic and first-principles thinking instead of just asking AI to fix architecture problems. This scaling challenge was the perfect test.

Problem #1: Data Fetching

You can't make 5,000 synchronous API calls and expect everything to be fine.

Before: The Naive Approach

# Synchronous disaster

def fetch_all_data(stocks):

results = []

for stock in stocks:

data = api.get_stock_data(stock) # Blocks for ~200ms each

results.append(data)

return results

# Time: 5000 × 200ms = 1,000 seconds = 16+ minutes just for fetching

After: Batched Async Processing

import asyncio

from asyncio import Semaphore

async def fetch_all_data(stocks, batch_size=50, rate_limit=10):

semaphore = Semaphore(rate_limit) # Respect API limits

async def fetch_one(stock):

async with semaphore:

return await api.get_stock_data_async(stock)

# Process in batches to manage memory

results = []

for i in range(0, len(stocks), batch_size):

batch = stocks[i:i + batch_size]

batch_results = await asyncio.gather(*[fetch_one(s) for s in batch])

results.extend(batch_results)

await asyncio.sleep(0.5) # Breathing room for the API

return results

# Time: ~2 minutes for 5000 stocks

Key insight: Async isn't magic. Without the semaphore and batching, we'd still get rate-limited. The trick is controlled concurrency.

Problem #2: Model Management

Here's a confession: I initially thought we'd need 300+ individual models—one fine-tuned for each sector and stock type.

That's insane.

The Reality Check:

- Loading 300 models into memory = ~60GB RAM

- Model switching overhead = death by a thousand context switches

- Maintenance nightmare = impossible to update

The Solution: Hierarchical Model Architecture

# Instead of 300 models, we use 3 tiers

class PredictionEngine:

def __init__(self):

# Tier 1: Base model (handles 80% of cases)

self.base_model = load_model("base_predictor.pt")

# Tier 2: Sector specialists (loaded on demand)

self.sector_models = {} # Lazy loading

# Tier 3: High-volatility handler

self.volatility_model = load_model("volatility_specialist.pt")

def predict(self, stock_data):

volatility = calculate_volatility(stock_data)

if volatility > THRESHOLD:

return self.volatility_model.predict(stock_data)

sector = stock_data.sector

if sector in HIGH_COMPLEXITY_SECTORS:

if sector not in self.sector_models:

self.sector_models[sector] = load_model(f"{sector}_model.pt")

return self.sector_models[sector].predict(stock_data)

return self.base_model.predict(stock_data)

Result:

- Memory usage: 60GB → 4GB

- Model count in memory: 300 → 3-5 at any time

- Accuracy: Actually improved (the base model generalized better)

Problem #3: The Pipeline Itself

Individual optimizations weren't enough. The entire architecture needed a rethink.

Before: Monolithic Script

[Fetch Data] → [Process] → [Predict] → [Save]

↓ ↓ ↓ ↓

(sync) (sync) (sync) (sync)

After: Distributed Pipeline

[Data Fetcher] → [Queue] → [Workers] → [Aggregator]

(async) Redis (parallel) (batch save)

↓ ↓ ↓ ↓

5 mins Buffer 5 workers 1 bulk write

We moved from a script to a system. Each component could fail independently, retry gracefully, and scale horizontally.

The Business Impact

This wasn't just a technical exercise. The scaling directly translated to user value:

- Before: Users could analyze 48 pre-selected stocks

- After: Users can screen the entire Indian market—5,000+ instruments

That's the difference between a toy and a product.

Our prediction pipeline now runs in under 25 minutes for the full market, compared to the 4+ hours (or crashes) we started with.

What I Learned

Building this system taught me lessons that no tutorial ever covered:

1. Code that works for 50 items rarely works for 5,000

The naive for loop is fine for prototypes. Production demands different thinking—batching, streaming, lazy loading, and graceful degradation.

2. Optimization isn't an afterthought—it's a requirement

I used to think: "Let's make it work first, then make it fast."

Now I think: "Let's understand the scale first, then design for it."

3. Constraints breed creativity

We couldn't throw more hardware at the problem (startup budget). That constraint forced us to think smarter—and the resulting architecture is more elegant than any "just scale vertically" solution.

4. AI can't architect for you

During my AI Detox, I realized that while AI tools are great for writing boilerplate, they struggle with system-level thinking. The architecture decisions came from understanding the problem deeply, not from prompts.

The Journey Continues

This is just one chapter in building production ML systems at scale. There's more to share:

- How we handle model drift detection across 5,000 instruments

- The monitoring stack that keeps us sane

- Why we chose Redis over Kafka (and when we'll switch)

I'm documenting this entire journey at buildwithali.tech. If you're building ML systems that need to actually work in production, follow along—I break things so you don't have to.

Questions about scaling ML systems? Reach out at hello@buildwithali.tech or connect on LinkedIn.